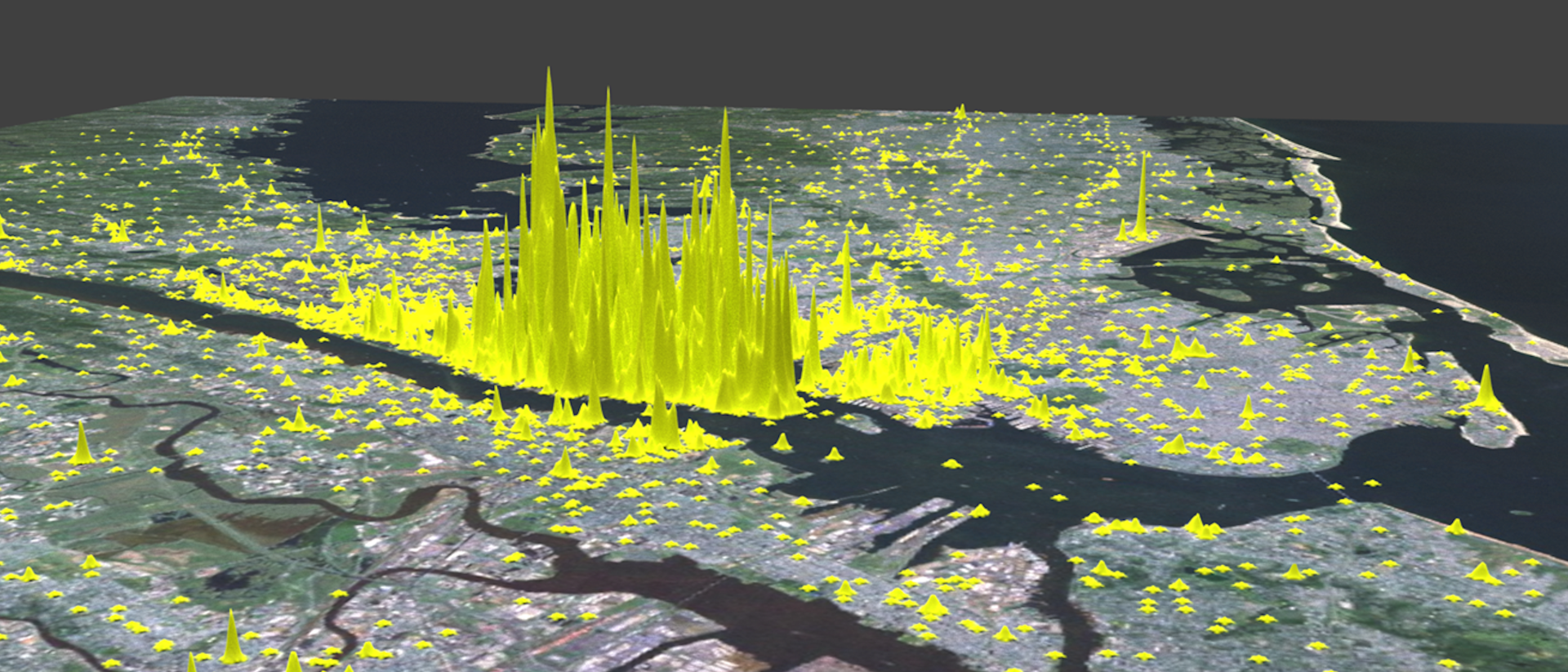

- Average Sentiment of Twitter Messages around New York. 2017, M. Werner

Research of the Professorship for Big Geospatial Data Management

Big Geospatial Data - Overview

- Selected aspects of Big Geospatial Data. (c) 2020 M. Werner

The research of the professorship for Big Geospatial Data Management covers all aspects of geometric as well as geospatial big data. As shown in the figure, examples of such data come from remote sensing, HD maps and GIS, social media, and movement data.

Within this environment we design and implement new

- spatial algorithms and data structures,

- infrastructure for big geospatial data,

- methods in machine learning and dependable artificial intelligence, and

- methods in computational geometry and topology.

We make our research available to the public in the form of articles and lectures and are in constant dialogue with politics, business and society. Furthermore, we pursue an open-source strategy so that other scientists as well as the public can easily apply our results.

Technology and Algorithms

The professorship is concerned with technology and algorithms, in the form of both software and hardware, in the field of geospatial data processing. FPGAs, GPUs and other accelerators play an increasingly important role, but classical aspects such as the I/O behavior of algorithms are equally important. Beyond the obvious distinction between asymptotic complexity classes, we focus on aspects of real-world performance, which can only be determined by measurement on concrete hardware. As computing platforms, we consider the whole range from embedded SoCs, smartphones and desktop PCs up to cloud clusters and HPC infrastructures. All scales of computing devices are relevant: some mass data can only be processed in cloud or HPC environments, while spatial data acquisition can put tight bounds on the energy consumption or size of computing devices.
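The point that asymptotic complexity alone does not settle real-world performance can be illustrated with a small measurement. The following is a minimal sketch, not code from the professorship: the function names and the grid are illustrative assumptions. Both functions visit every cell exactly once, so they share the same complexity class, yet their running times can only be compared by measuring them on the machine at hand.

```python
import timeit


def sum_row_major(grid):
    """Sum all cells, visiting them row by row."""
    total = 0
    for row in grid:
        for value in row:
            total += value
    return total


def sum_column_major(grid):
    """Sum all cells, visiting them column by column (same complexity class)."""
    total = 0
    for c in range(len(grid[0])):
        for row in grid:
            total += row[c]
    return total


def measure(func, grid, repeats=5):
    """Return the best wall-clock time in seconds over several runs."""
    return min(timeit.repeat(lambda: func(grid), number=1, repeat=repeats))


if __name__ == "__main__":
    # Hypothetical test data: a 1000 x 1000 grid of small integers.
    grid = [[(r * 31 + c) % 7 for c in range(1000)] for r in range(1000)]
    for func in (sum_row_major, sum_column_major):
        print(func.__name__, f"{measure(func, grid):.4f} s")
```

The measured times depend on the interpreter, memory hierarchy and hardware, which is why no expected output is given here.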

Spatial Algorithms

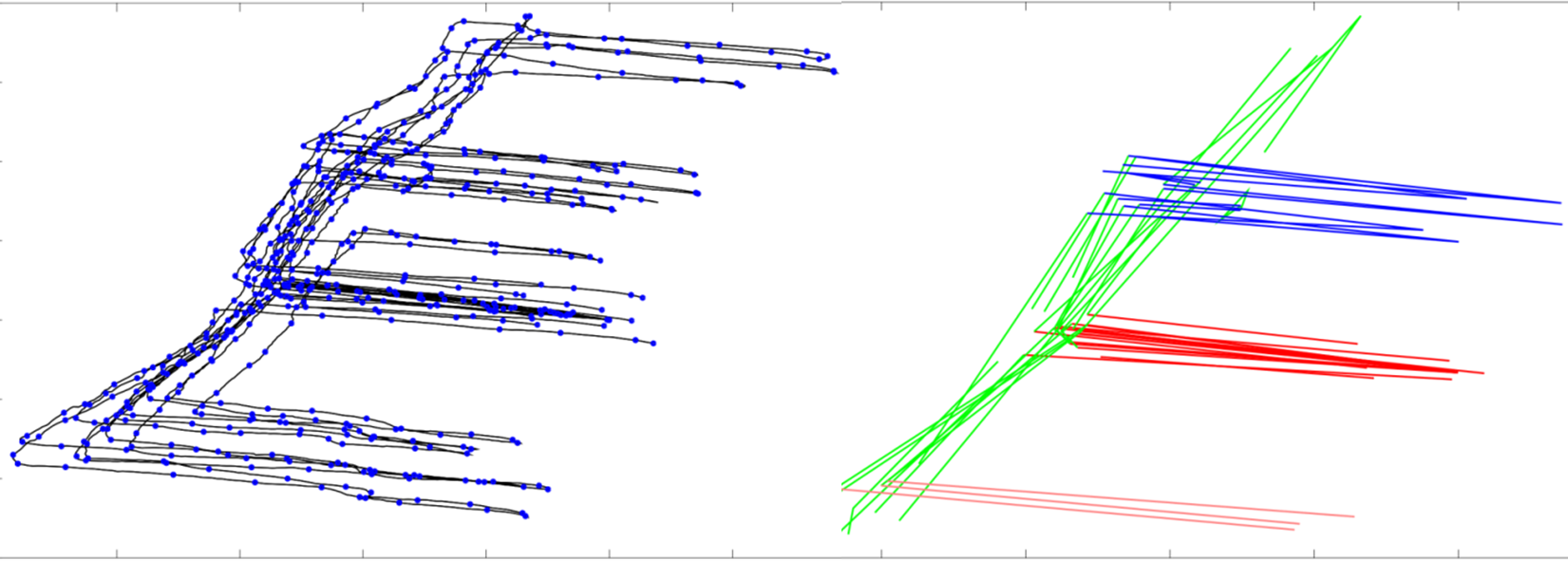

- Sample of a spatial algorithm: detailed and highly noisy motion data from a step counter are automatically broken down into building parts. (c) 2015 M. Werner

Spatial algorithms are a family of algorithms that exploit the rules of geometry and topology to infer knowledge. Many of these algorithms suffer from high computational complexity, so excellent implementations and insightful approximations are vital for making spatial algorithms work on big data.

Typical algorithms of interest include those that detect “Elements of Interest” such as spatio-temporal hotspots, cliques, spatial decompositions, shapes from point clouds, and alternative routes in planning and optimization.
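As an illustration of the simplest kind of spatio-temporal hotspot detection, the sketch below bins events into a regular space-time grid and reports the cells that contain many events. This is a deliberately naive, hypothetical example and not one of the professorship's algorithms; the function name `find_hotspots`, the event format `(x, y, t)` and all parameters are assumptions made for illustration.

```python
from collections import Counter
from math import floor


def find_hotspots(events, cell_size, time_window, min_count):
    """Return {(cell_x, cell_y, time_bin): count} for all dense grid cells.

    events      -- iterable of (x, y, t) tuples in arbitrary but consistent units
    cell_size   -- edge length of a square spatial cell
    time_window -- length of one time bin
    min_count   -- minimum number of events for a cell to count as a hotspot
    """
    counts = Counter(
        (floor(x / cell_size), floor(y / cell_size), floor(t / time_window))
        for x, y, t in events
    )
    return {cell: n for cell, n in counts.items() if n >= min_count}


if __name__ == "__main__":
    events = [(0.1, 0.2, 5), (0.3, 0.4, 7), (0.9, 0.9, 9), (5.0, 5.0, 5), (0.1, 0.1, 100)]
    print(find_hotspots(events, cell_size=1.0, time_window=60, min_count=3))
```

A fixed grid is sensitive to where cell boundaries fall, which is one reason more careful methods are needed in practice.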

Big Geospatial Data Infrastructure

- Social media combined with satellite data over Europe, rendered using ray tracing and physical materials. (c) 2017 M. Werner

Big data is perceived today as a fairly mature technology and is widely used in business and science. However, the inherent properties of geometric data lead to difficulties that big data infrastructures do not yet cover well. One is that spaces with more than one dimension have no natural linear order, so that, for example, distributing data across the nodes of a distributed system is much more complex than it is for sortable data sets. In addition, the uncertainty of location information is a particular challenge: in practice, almost every calculation with geospatial data has to take all nearby data items into account. Depending on the size of this neighborhood, either the neighborhoods must be stored redundantly across the cluster or algorithms must rely on data from other nodes during execution. In this context, we are working on data structures, distributed algorithms, and distributed database management systems that enable the efficient management of geospatial data at large scale.
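One widely used workaround for the missing order in two dimensions is a space-filling curve such as the Z-order (Morton) curve, which maps grid cells to a single sortable key. The text above does not say which technique the professorship uses, so the following is only a sketch of this common approach; the function names and the 16-bit coordinate limit are assumptions. It also shows the limitation: the order preserves spatial locality only approximately, so some neighboring cells still end up on different nodes.

```python
def interleave_bits(x, y, bits=16):
    """Return the Morton code of non-negative integer cell coordinates (x, y)."""
    code = 0
    for i in range(bits):
        code |= ((x >> i) & 1) << (2 * i)        # x bits go to even positions
        code |= ((y >> i) & 1) << (2 * i + 1)    # y bits go to odd positions
    return code


def partition_by_z_order(cells, n_nodes):
    """Sort cells along the Z-order curve and cut the order into contiguous chunks.

    Returns a list with at most n_nodes lists of cells, one per node.
    """
    if not cells:
        return []
    ordered = sorted(cells, key=lambda c: interleave_bits(c[0], c[1]))
    chunk = -(-len(ordered) // n_nodes)  # ceiling division
    return [ordered[i:i + chunk] for i in range(0, len(ordered), chunk)]


if __name__ == "__main__":
    cells = [(x, y) for x in range(4) for y in range(4)]
    for node, part in enumerate(partition_by_z_order(cells, 4)):
        print(node, part)
```

On the 4 x 4 example each node receives one 2 x 2 block, but cells such as (1, 0) and (2, 0) are direct neighbors stored on different nodes, which is exactly the situation where redundant storage or communication between nodes becomes necessary.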

Machine Learning and Dependable Artificial Intelligence

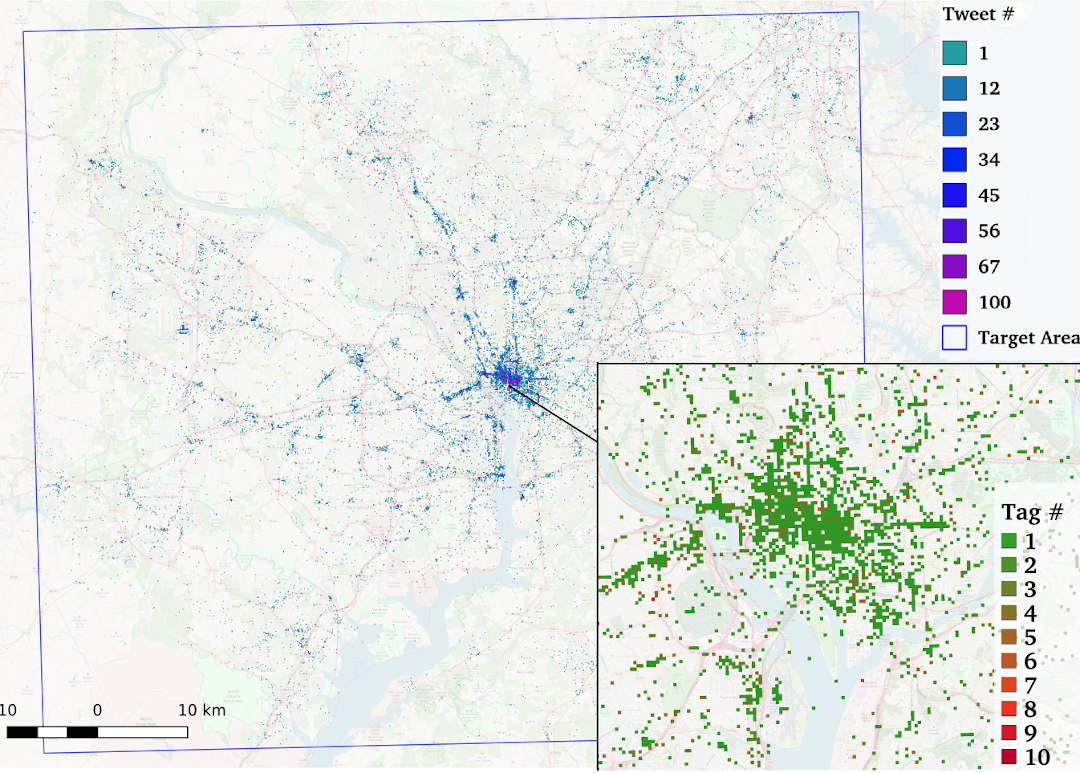

- Example of augmenting a machine learning task with data from another domain: the estimation of local climate zones was significantly improved with social media data. (c) 2017 ICAML

Knowledge discovery, statistical modeling, big data, data mining, data science, machine learning, and artificial intelligence are closely related terms, all of which deal with different aspects of extracting knowledge from data. Within this environment, we focus on the special field of knowledge extraction from data related to geometry and geographic space. In this context, we also address the credibility of artificial intelligence systems. This includes questions of explainability (“Explainable AI”), dependability (“Dependable AI”) and real-time capability (“Real-Time AI”).

In contrast to many domains where artificial intelligence is already used very successfully, the limitations with geographic data stem from the high dimensionality and semantic complexity of the observations combined with very little training data. Thus, the desired statistical distribution of a problem is rarely encoded in the training data set. Therefore, methods have to be created that work without training data (unsupervised machine learning), with poor training data (weakly supervised learning), with training data from other domains (transfer learning), or with the direct involvement of humans (active learning, citizen science).

Geometry and Topology in Spatial Data Science

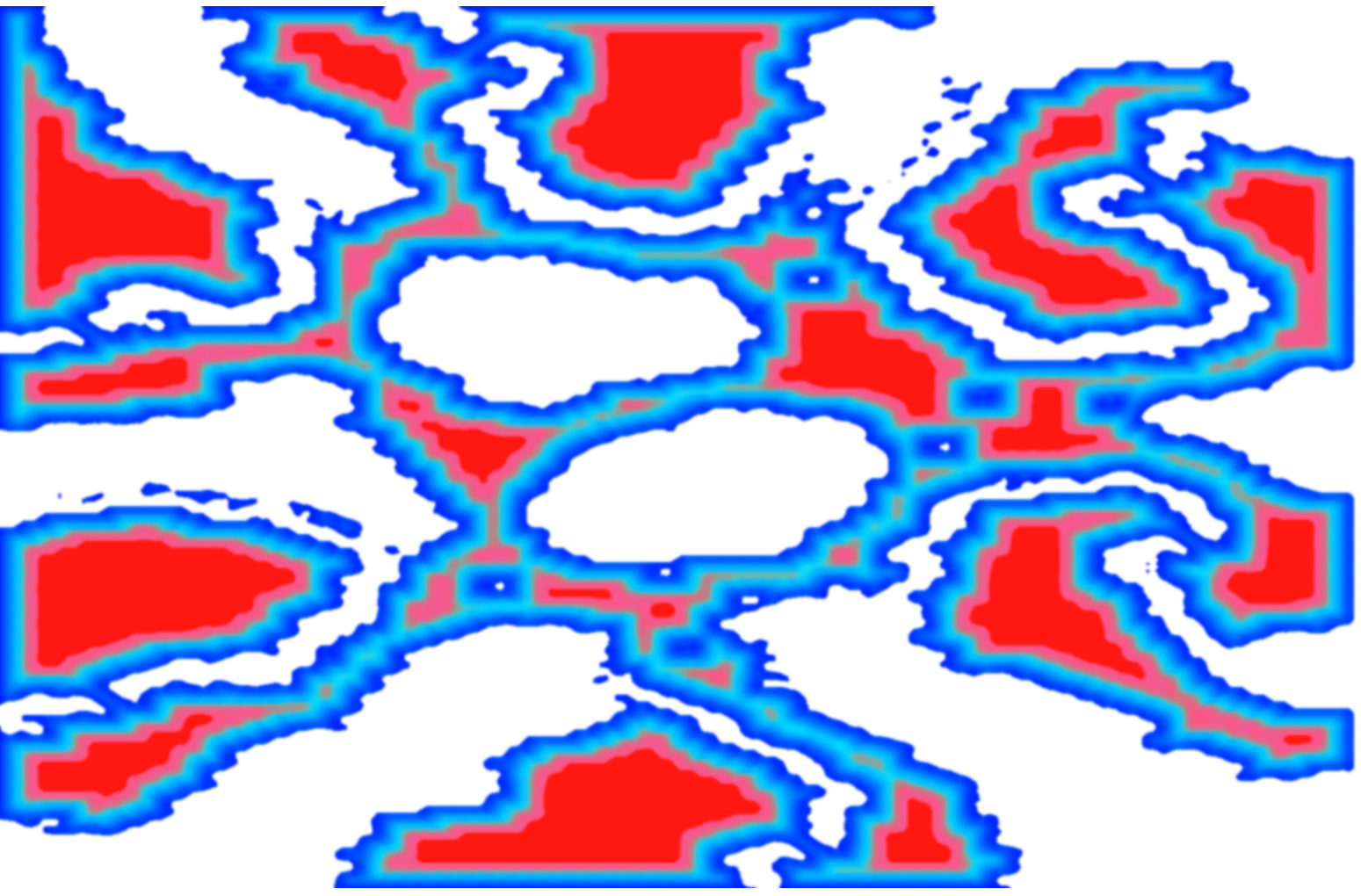

- Space decomposition with shrinkage persistence - a data-driven definition of subspaces. (c) 2018 M. Werner

Geometry and topology are two essential concepts for understanding spatial data. In geometry, space is measured, usually by a distance function and dimensions derived from it. This includes database queries for k nearest neighbours (kNN), clustering algorithms such as k-means, in which centres are moved iteratively to represent the data as well as possible, and complex computational geometry algorithms such as the determination of the Fréchet distance. Topology, in contrast to geometry, is more concerned with modeling rough relationships, e.g. by neighborhoods in a graph or by considering geometric properties that remain constant under deformation. This reduced selectivity of topology often fits very well with human concepts of space.
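The iterative movement of centres in k-means can be sketched in a few lines. The version below is the textbook Lloyd iteration with Euclidean distance, written in plain Python for readability; the function name, the explicit initial centres and the iteration limit are assumptions for illustration, and no claim is made that this matches any implementation used by the professorship.

```python
from math import dist


def k_means(points, centres, iterations=10):
    """Move the given initial centres until they stop changing or the limit is hit."""
    centres = [tuple(c) for c in centres]
    for _ in range(iterations):
        # Assignment step: attach every point to its nearest centre.
        clusters = [[] for _ in centres]
        for p in points:
            nearest = min(range(len(centres)), key=lambda i: dist(p, centres[i]))
            clusters[nearest].append(p)
        # Update step: move each centre to the mean of its assigned points.
        new_centres = []
        for centre, members in zip(centres, clusters):
            if members:
                new_centres.append(tuple(sum(c) / len(members) for c in zip(*members)))
            else:
                new_centres.append(centre)  # keep a centre that attracted no points
        if new_centres == centres:
            break
        centres = new_centres
    return centres


if __name__ == "__main__":
    points = [(0, 0), (0, 2), (10, 0), (10, 2)]
    print(k_means(points, centres=[(1, 1), (9, 1)]))
```

The result depends on the initial centres, and the nearest-centre search in the assignment step is itself a nearest-neighbour query of the kind mentioned above.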

Data types and data sources

Big Data is often characterized by the three Vs: volume, velocity, and variety, where volume describes the amount of data, velocity the speed at which the data arrives, and variety the range of formats and standards in which the data comes. The following sections give an impression of the diversity of Big Geospatial Data.

Remote Sensing

- Sentinel-2 image of the Munich area. (c) 2019 M. Werner, with data from ESA's Copernicus programme

Remote sensing is used to observe things from a distance, often using airborne platforms (aircraft, drones) or space platforms (satellites, ISS). We work with experts in this field to process such data effectively and efficiently. These data provide very high-quality observations all over the world with increasing frequency and resolution and are therefore particularly suitable for investigations in climate research, urbanization research, agriculture and other macroscopic observations.

Of particular interest are algorithms that process and compress the incoming data in such a way that data mining or machine learning methods can still extract a maximum of information at minimum memory consumption. Without such algorithms, the quantity of data from such satellites exceeds what can be used economically. Among other things, we are working on information-theoretical methods that perform common tasks such as cloud detection, change detection and classification with the greatest possible storage efficiency.
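A basic information-theoretical quantity behind such considerations is the Shannon entropy of the pixel values, which gives a lower bound on the average number of bits per pixel for lossless coding if pixels are treated as independent symbols. The sketch below is a generic illustration of this trade-off between information and storage, not one of the professorship's methods; the function names and the toy pixel values are assumptions.

```python
from collections import Counter
from math import log2


def shannon_entropy(values):
    """Return the empirical entropy of a sequence of symbols in bits per symbol."""
    counts = Counter(values)
    total = len(values)
    return -sum((n / total) * log2(n / total) for n in counts.values())


def quantize(values, step):
    """Coarsen integer pixel values by merging `step` neighbouring levels."""
    return [v // step for v in values]


if __name__ == "__main__":
    pixels = [0, 1, 2, 3]  # hypothetical tile with four distinct grey levels
    print(shannon_entropy(pixels))               # bits per pixel before quantization
    print(shannon_entropy(quantize(pixels, 2)))  # bits per pixel after quantization
```

Quantization lowers the entropy and thus the storage need, but it also discards detail, so the interesting question is how much of the information relevant for a task such as classification survives.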

HD Maps and GIS

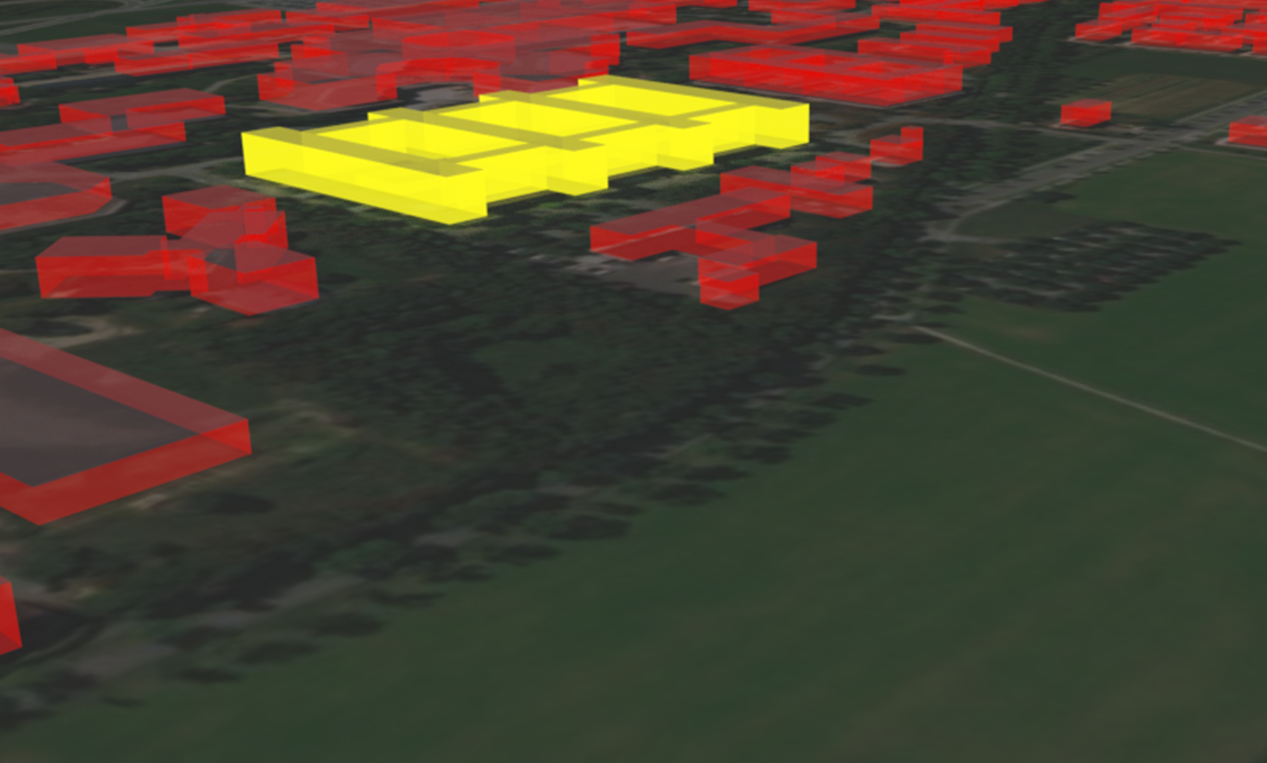

- High-resolution GIS information in the interactive application GeoDialog. (c) 2020 M. Werner, with data from ESRI and OSM

The accuracy, level of detail and resolution of available map information have been increasing, and not only since the vision of autonomous driving emerged. We summarize this development, which includes both measured map information (3D point clouds in autonomous driving, image collections, etc.) and modeled map information (OSM, ATKIS, INSPIRE, etc.), under the topic of HD maps. In this area we are particularly interested in structuring such data for analysis and distribution, because this map information is usually needed in a mobile context, where only limited computing and communication resources are available. We also apply such data in positioning, navigation, planning and simulation to obtain the most realistic perspectives.
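One common way to structure map data for distribution to resource-constrained clients is to cut it into tiles so that a device only downloads the area it needs. The sketch below computes tile indices in the Web Mercator tiling scheme used by many OSM-based map services. The text does not state which structuring the professorship uses, so this is an illustrative assumption, as are the function name and the sample coordinates.

```python
from math import asinh, floor, pi, radians, tan


def lonlat_to_tile(lon_deg, lat_deg, zoom):
    """Return the (x, y) tile index containing a WGS84 coordinate at a zoom level.

    Valid for latitudes inside the Web Mercator range (about +/-85.05 degrees).
    """
    n = 2 ** zoom  # number of tiles per axis at this zoom level
    x = floor((lon_deg + 180.0) / 360.0 * n)
    y = floor((1.0 - asinh(tan(radians(lat_deg))) / pi) / 2.0 * n)
    # Clamp so that coordinates on the map border still yield a valid index.
    return min(max(x, 0), n - 1), min(max(y, 0), n - 1)


if __name__ == "__main__":
    # Approximate coordinates of Munich, used here only as sample input.
    print(lonlat_to_tile(11.575, 48.137, zoom=10))
```

Each additional zoom level quadruples the number of tiles, so the zoom level directly controls the trade-off between detail and the amount of data a mobile client has to fetch.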

Social Media

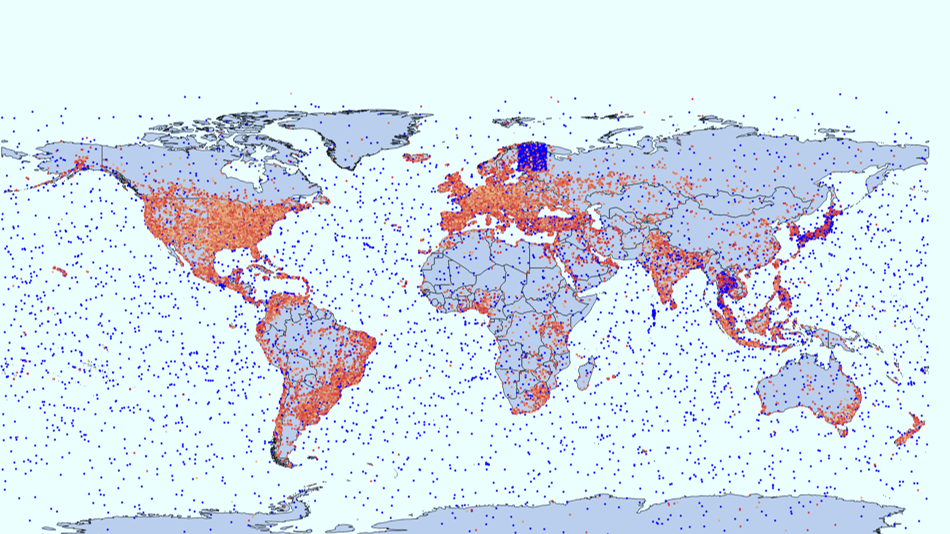

- Social media bot detection: rejecting a significant amount of noise from Twitter. (c) 2019 M. Werner; data courtesy of Twitter Inc.

Social media provide very large quantities of georeferenced observations in near real time. From such data, events, hotspots (trends) and anomalies can be identified. Furthermore, a spatio-temporal signal can be extracted over longer periods of time, which correlates strongly with socio-demographic factors and behaviors.

Such a covariate can then be used together with other data to introduce additional selectivity into a data-driven analysis based on measurements. We use such data in research for the resolution of ambiguities, as input for simulations, and for the analysis of microscopic and macroscopic sensor data related to the human population.

Movement Data

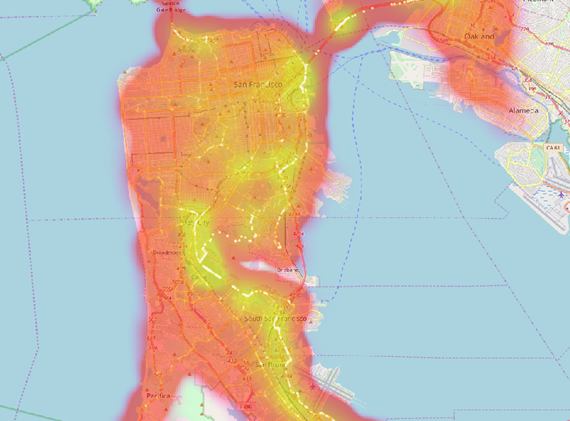

- Mobility analysis in San Francisco shows expected traffic load in the area. (c) 2018 M. Werner

Movement data are a very useful source of spatial knowledge, because movement manifests the daily routines in cities, the movement of goods and commodities, the social behavior of wild animals and many other aspects that we want to understand. However, movement data are also particularly difficult to process. In this context, our task is to provide application-ready algorithms for the analysis of movement data that combine correctness, validity and efficiency. This area also includes aspects of motion detection, e.g. for indoor positioning, and of comparing movements in complex spaces (e.g. in constrained free space). In addition to individual research methods, we provide a collection of the most important algorithms for this field as a library for research and application: libTrajcomp.
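A standard building block for comparing movements is a distance between trajectories. The sketch below computes the discrete Fréchet distance between two sampled trajectories with the classical dynamic program. It is a standalone illustration that does not use or reproduce the libTrajcomp API; the function name and the sample trajectories are assumptions, and the discrete variant only approximates the continuous Fréchet distance.

```python
from math import dist


def discrete_frechet(p, q):
    """Return the discrete Fréchet distance between two non-empty point sequences."""
    n, m = len(p), len(q)
    # ca[i][j] holds the distance for the prefixes p[:i + 1] and q[:j + 1].
    ca = [[0.0] * m for _ in range(n)]
    for i in range(n):
        for j in range(m):
            d = dist(p[i], q[j])
            if i == 0 and j == 0:
                ca[i][j] = d
            elif i == 0:
                ca[i][j] = max(ca[0][j - 1], d)
            elif j == 0:
                ca[i][j] = max(ca[i - 1][0], d)
            else:
                # Advance on p, on q, or on both, whichever keeps the leash shortest.
                ca[i][j] = max(min(ca[i - 1][j], ca[i - 1][j - 1], ca[i][j - 1]), d)
    return ca[-1][-1]


if __name__ == "__main__":
    walk_a = [(0, 0), (1, 0), (2, 0)]
    walk_b = [(0, 1), (1, 1), (2, 1)]
    print(discrete_frechet(walk_a, walk_b))
```

The table has n times m entries, so the running time is quadratic in the trajectory length, which illustrates why efficient implementations matter for large movement data sets.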

Contact

Big Geospatial Data Management

Lise-Meitner-Str. 9

85521 Ottobrunn

martin.werner@tum.de
